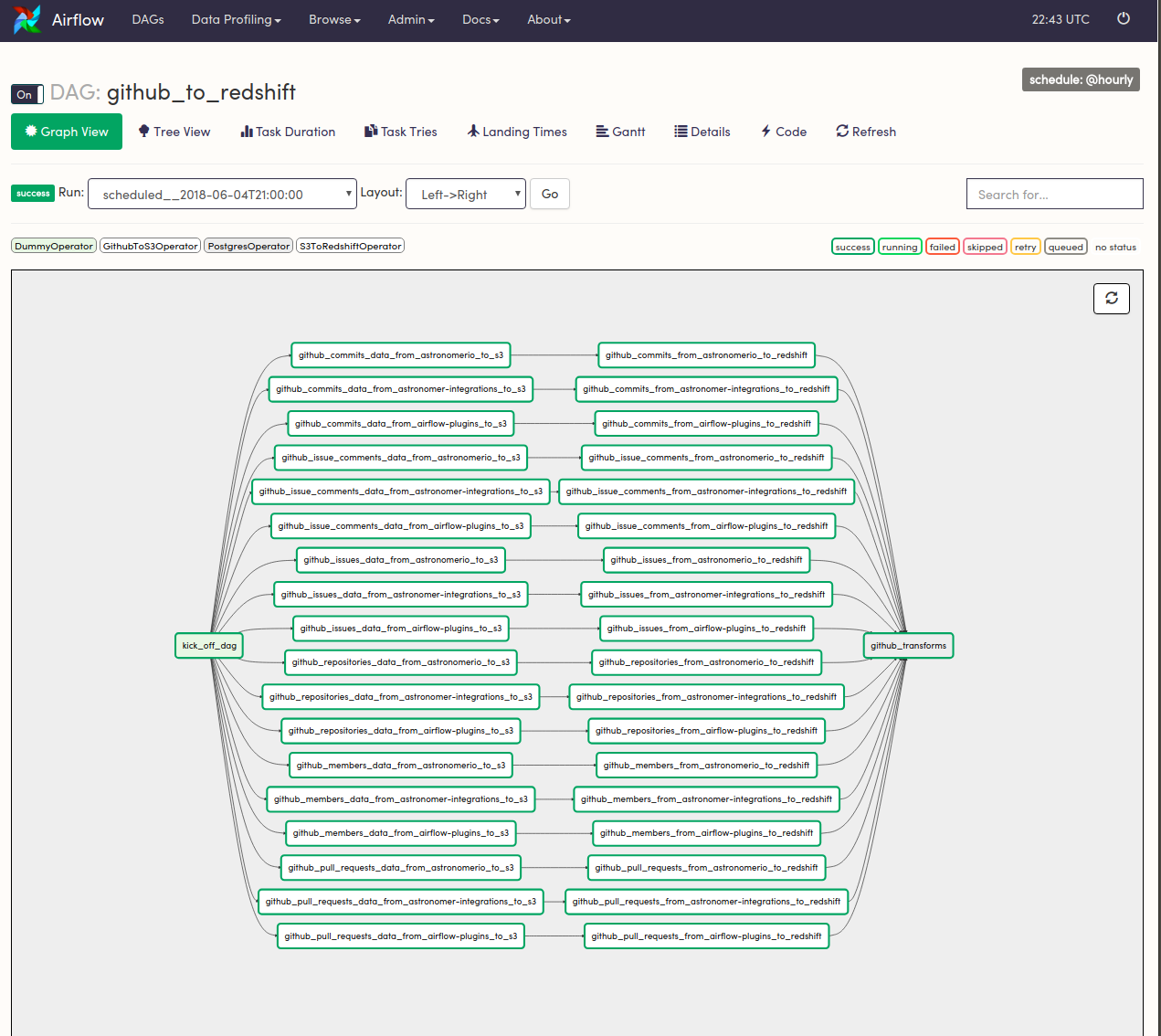

The Graph view shows a visualization of the tasks and dependencies in your DAG and their current status for a specific DAG run. This view is particularly useful when reviewing and developing a DAG. When running the DAG, toggle Auto-refresh to see the status of the tasks update in real time.

Click a specific task in the graph to access additional views and actions for the task instance.

Specifically, the additional views available are:

- Task Instance Details: Shows the fully rendered task, an exact summary of what the task does (attributes, values, templates, etc.).
- Rendered Template: Shows the task's metadata after it has been templated.
- Log: Shows the logs of that particular TaskInstance.
- XCom: Shows XComs created by that particular TaskInstance.
- List Instances, all runs: Shows a historical view of task instances and statuses for that particular task.
- Filter Upstream: Updates the Graph view to show only the selected task and any upstream tasks.

The actions available for the task instance are:

- Run: Manually runs a specific task in the DAG. You can choose to ignore dependencies and the current task state when you do this.
- Clear: Removes that task instance from the metadata database. This is one way of manually re-running a task (and any downstream tasks, if you choose). You can also choose to clear upstream or downstream tasks in the same DAG, or past or future task instances of that task.
- Mark Failed: Changes the task's status to failed. This updates the metadata database and stops downstream tasks from running if that is how you have defined dependencies in your DAG.

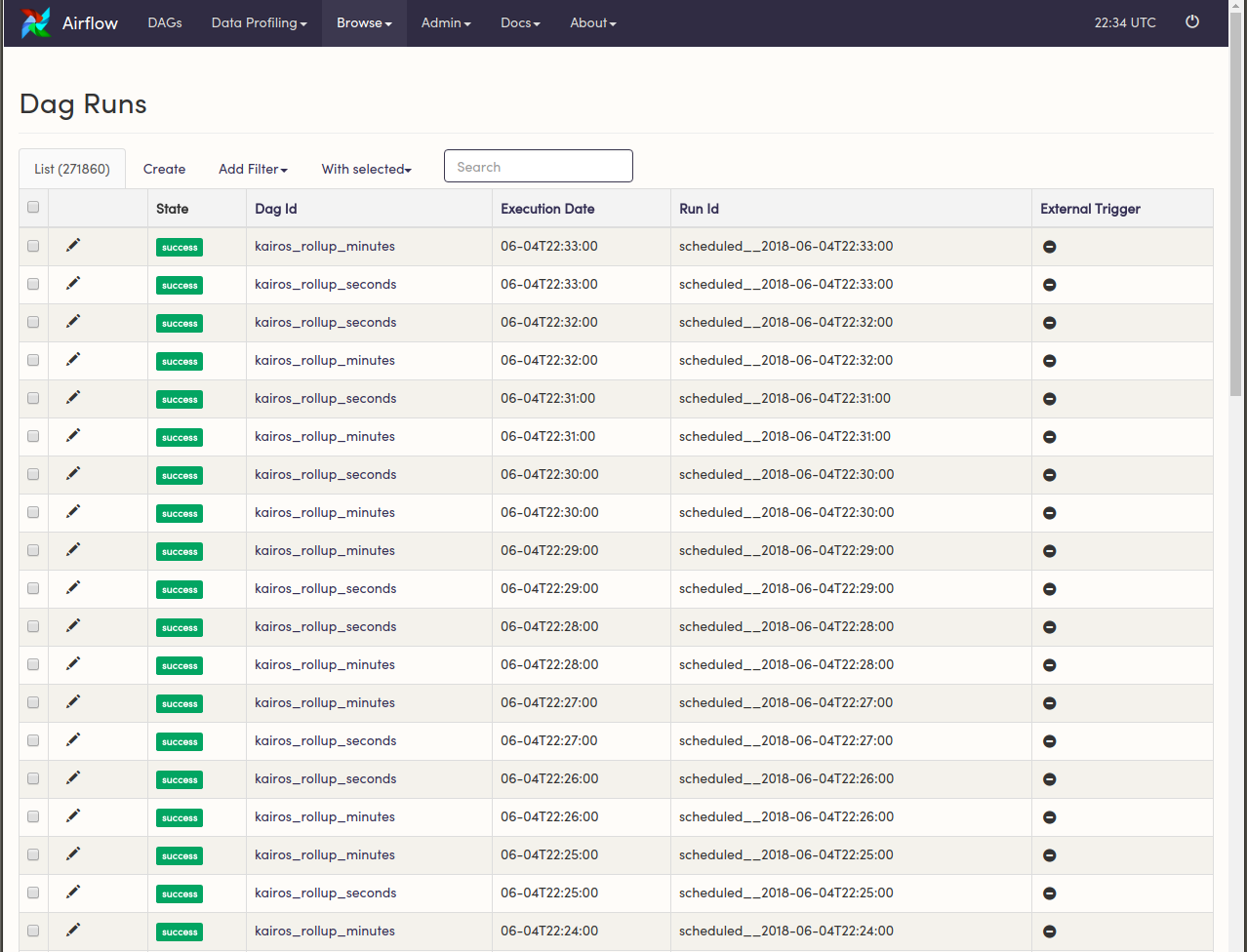

Other than some modified colors and an additional Astronomer tab, the UI is the same as that of OSS Airflow. To get the most out of this guide, you should have an understanding of:

The DAGs view is the landing page when you sign in to Airflow. It shows a list of all your DAGs, the status of recent DAG runs and tasks, the time of the last DAG run, and basic metadata about the DAG like the owner and the schedule. To see the status of the DAGs update in real time, toggle Auto-refresh (added in Airflow 2.4).

From this view you can:

- Pause/unpause a DAG with the toggle to the left of the DAG name.
- Filter the list of DAGs to show active, paused, or all DAGs.
- Trigger, refresh, or delete a DAG with the buttons in the Actions section.
- Navigate quickly to other DAG-specific pages from the Links section.

To see more information about a specific DAG, click its name or use one of the links.

A notable feature of Apache Airflow is the user interface (UI), which provides insights into your DAGs and DAG runs. The UI is a useful tool for understanding, monitoring, and troubleshooting your pipelines. This guide is an overview of some of the most useful features and visualizations in the Airflow UI. Each section of this guide corresponds to one of the tabs at the top of the Airflow UI. If you're not already using Airflow and want to get it up and running to follow along, see Install the Astro CLI to quickly run Airflow locally. If you're using an older version of the UI, see Upgrading from 1.10 to 2. All images in this guide were taken from an Astronomer Runtime Airflow image.

I'm trying to find a way to retrieve Azure Key Vault secrets on my Astronomer Airflow instance. I tried to follow a similar approach from your documentation on Hashicorp Vault & AWS SSM Parameter Store, and this documentation from Airflow: Azure Key Vault Backend - apache-airflow-providers-microsoft-azure Documentation.

I'm using the "Service Principal with Secret" credentials to authenticate with the DefaultAzureCredential() method. Documentation found here: Azure Identity client library for Python - Azure SDK for Python 2.0.0 documentation.

So far it's not working yet (for context, I'm testing this locally).

Here's what I have in my Dockerfile (please note that I have AZURE_CLIENT_ID, AZURE_TENANT_ID, AZURE_CLIENT_SECRET & AZURE_VAULT_URL saved in my .env file):

    FROM quay.io/astronomer/ap-airflow:2.0.0-buster-onbuild
    ENV AZURE_CLIENT_SECRET=$AZURE_CLIENT_SECRET
    ENV AIRFLOW_SECRETS_BACKEND=".secrets.azure_key_vault.AzureKeyVaultBackend"
    ENV AIRFLOW_SECRETS_BACKEND_KWARGS=''

Here's what I have in my DAG file:

    from _operator import PythonOperator
    from _hook import BaseHook
    from _key_vault import AzureKeyVaultBackend

    Variable = AzureKeyVaultBackend.get_variable(kwargs)

    with DAG('test_keyvault_dag', start_date=datetime(2021, 3, 3), schedule_interval=None) as dag:

I'd love to get some feedback on how I can make this work.
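One possible cause of the Azure Key Vault problem described here, offered as a sketch and not a confirmed diagnosis: Airflow reads configuration overrides from environment variables named `AIRFLOW__{SECTION}__{KEY}` (double underscores), and `AzureKeyVaultBackend` takes its settings as a JSON object in `backend_kwargs`, so an empty string leaves it without a `vault_url`. The helper below builds both values using only the standard library. The function name `build_secrets_env` and the fallback vault URL are hypothetical, and the full class path is an assumption reconstructed from the truncated value in the post, so check it against your installed provider version.

```python
import json
import os

# Assumed full class path; the post shows only the tail of it, and newer
# provider releases may use a different module name.
BACKEND_CLASS = (
    "airflow.providers.microsoft.azure.secrets."
    "azure_key_vault.AzureKeyVaultBackend"
)


def build_secrets_env(vault_url: str) -> dict:
    """Return the two environment variables that select the secrets backend."""
    if not vault_url:
        raise ValueError("vault_url must not be empty")
    backend_kwargs = {
        # Prefixes the backend prepends when it looks up a secret name.
        "connections_prefix": "airflow-connections",
        "variables_prefix": "airflow-variables",
        "vault_url": vault_url,
    }
    return {
        "AIRFLOW__SECRETS__BACKEND": BACKEND_CLASS,
        "AIRFLOW__SECRETS__BACKEND_KWARGS": json.dumps(backend_kwargs),
    }


if __name__ == "__main__":
    # Hypothetical fallback URL, used only when AZURE_VAULT_URL is unset.
    url = os.environ.get(
        "AZURE_VAULT_URL", "https://example-vault.vault.azure.net/"
    )
    for name, value in build_secrets_env(url).items():
        print(f"{name}={value}")
```

With these two variables set, and the `AZURE_CLIENT_ID`, `AZURE_TENANT_ID`, and `AZURE_CLIENT_SECRET` values visible inside the container, a DAG would normally read a value with `Variable.get("my-key")` from `airflow.models` instead of calling the backend class directly. With the prefixes above, Airflow would then look for a Key Vault secret named `airflow-variables-my-key`.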
